You write a prompt. You hit enter. The response comes back, and it's fine. Not great. Not what you had in mind. So you tweak a word, try again, get another mediocre result, and repeat the cycle three more times before giving up and accepting whatever the AI spits out.

This is how most people use AI tools. They blame the model when the real problem is often the prompt. An AI prompt evaluator helps fix this by analyzing your prompt before you send it and catching the issues that lead to weak outputs.

You Can't Proofread Your Own Prompts

There's a reason spell checkers exist, even though everyone knows how to spell. Your brain autocorrects what you've written. You read what you meant, not what you typed. Prompts have the same problem, but worse.

When you write "create an SEO-optimized blog post in a conversational style", your brain fills in everything you left out. You know which keywords you're targeting. You know what "conversational" means to you. You know who your audience is. But none of that context made it into the prompt. The AI gets the words on screen and nothing else.

This blind spot is why experienced AI users still write prompts that score poorly. It's not a knowledge gap. It's a perception gap. You can't objectively evaluate instructions you just wrote because you already know the answer to every question the AI is about to ask. A prompt checker catches what your brain skips over: the missing context, the vague instructions, the assumptions you didn't realize you were making.

Content creators miss audience details. Developers leave out edge cases. Marketers write "make it engaging" without defining what engaging means for their specific audience. The pattern is the same across every role: people know what they want but don't transfer that knowledge into the prompt.

What an AI Prompt Evaluator Actually Does

A prompt evaluator reviews your input and scores it based on how likely it is to produce a good response. Think of it as a second pair of eyes that only looks at prompt quality.

It checks for things like clarity, specificity, structure, and whether you've given the AI enough context to work with. Then it flags what's weak and tells you how to fix it. Instead of guessing why ChatGPT gave you a generic answer, you get a specific breakdown before you even hit send.

An AI prompt evaluator helps you bridge the gap between a vague prompt and a precise one without becoming a prompt engineering expert. A prompt like "write me a blog post about marketing" will almost always give you filler content. One that specifies the audience, tone, keywords, and output format is far more likely to give you something usable on the first try.

AI Prompt Evaluator in Action: Before and After

Theory is easy to nod along with. Results are harder to ignore. Here's a real prompt evaluated by SpacePrompts' AI prompt evaluator tool, followed by the fixed version after applying the feedback.

The Original Prompt (Score: 6/10)

You are an expert blog writer. Create an SEO-optimized blog post that's engaging and valuable.

REQUIRED INFO:

- Blog topic: [______]

- Target audience: [______]

RULES:

✓ Start with an engaging hook

✓ Use clear headers (H2, H3) for structure

✓ Write in a conversational, easy-to-read style

✓ Include actionable takeaways

✗ No keyword stuffing

✗ No generic AI phrases like "In today's digital landscape"

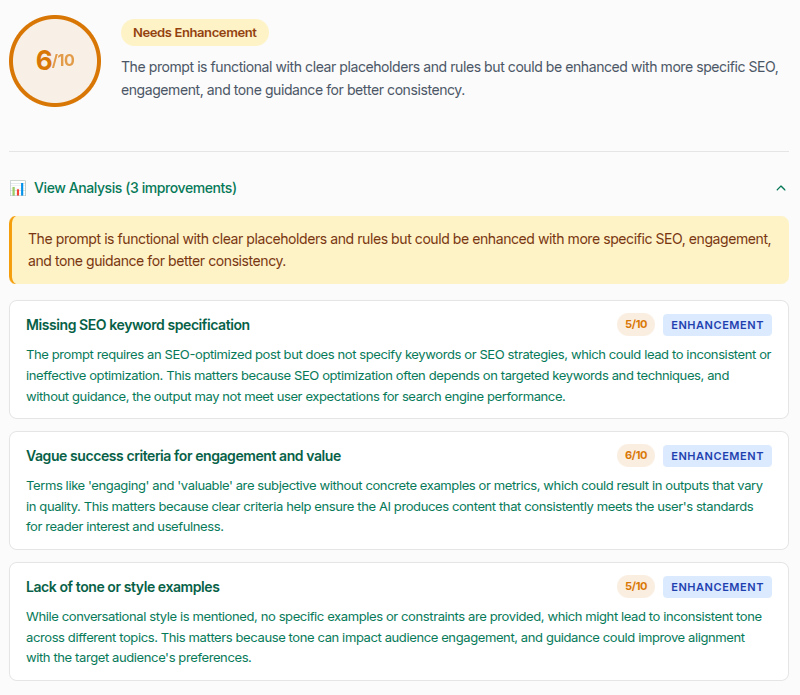

Generate a well-structured blog post (800-1200 words) with introduction, body, and conclusion.

This looks reasonable. It has structure, placeholders, and clear rules. Most people would use it as-is. But the evaluator flagged three specific problems:

Missing SEO keyword specification (5/10). The prompt asks for SEO-optimized content but never says which keywords to target. The AI has to guess, and guessing leads to inconsistent optimization.

Vague success criteria (6/10). Words like "engaging" and "valuable" are subjective. Without concrete examples or measurable standards, the AI has no target to aim for. What's engaging for a developer audience doesn't look the same as what works for casual readers.

Lack of tone or style examples (5/10). "Conversational style" means something different to everyone. Without a reference point or specific descriptors, the AI picks its own interpretation, which might not match yours at all.

Each of these issues is invisible when you re-read your own prompt. You already know the keywords you want. You already have a mental picture of "engaging". Your brain automatically fills in those blanks. The prompt quality checker catches what you can't see.
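We don't know how SpacePrompts' evaluator works internally, so here is only a toy illustration of the idea: a few keyword heuristics in Python that flag the three gaps above. Everything in it (`check_prompt`, the word lists, the warning labels) is hypothetical. A keyword match is a crude stand-in for real judgment: it can tell that "engaging" appears without any "e.g.", but not whether an example actually defines it well.

```python
import re

# Hypothetical word lists for illustration only; far from exhaustive.
VAGUE_TERMS = ("engaging", "valuable", "conversational")
DEFINITION_CUES = ("e.g.", "for example", "such as")
PLACEHOLDER = re.compile(r"\[_+\]")  # matches template blanks like [______]


def check_prompt(prompt: str) -> list[str]:
    """Return warnings for three common prompt gaps, plus unfilled blanks."""
    text = prompt.lower()
    warnings = []

    # Gap 1: SEO is requested but no target keywords are ever mentioned.
    # "keyword stuffing" is a rule, not a keyword list, so it doesn't count.
    mentions_keywords = "keyword" in text.replace("keyword stuffing", "")
    if "seo" in text and not mentions_keywords:
        warnings.append("missing-keywords: asks for SEO but names no target keywords")

    # Gap 2: subjective words with no example or criterion anywhere in the prompt.
    vague = [term for term in VAGUE_TERMS if term in text]
    if vague and not any(cue in text for cue in DEFINITION_CUES):
        warnings.append("vague-criteria: undefined terms " + ", ".join(vague))

    # Gap 3: a style label is given, but tone is never spelled out.
    if "style" in text and not re.search(r"\btone\b", text):
        warnings.append("no-tone-options: style requested without tone descriptors")

    # Templates are fine, but every blank must be filled before sending.
    blanks = len(PLACEHOLDER.findall(prompt))
    if blanks:
        warnings.append(f"unfilled-placeholders: {blanks} blank(s) left to fill")

    return warnings
```

Run `check_prompt` on the original template and it reports all three gaps plus the two blanks. Add a keywords field, a tone field, and an "e.g." example, and the first three warnings disappear while the blank count goes up, reminding you to fill the template in.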

The Improved Prompt (Score: 9/10)

After applying the evaluator's feedback, the same prompt looks like this:

You are an expert blog writer. Create an SEO-optimized blog post that's engaging and valuable.

REQUIRED INFO:

- Blog topic: [______]

- Target audience: [______]

- Primary SEO Keywords/Key Phrases: [______]

- Desired Tone & Style: [e.g., Casual & Relatable, Authoritative & Expert, Inspirational & Motivational, etc.]

RULES:

✓ Start with a strong, engaging hook (e.g., a surprising stat, a relatable question, a bold statement).

✓ Use clear, hierarchical headers (H2 for main sections, H3 for subsections) for structure and scannability.

✓ Write in a conversational, easy-to-read style as specified above. Vary sentence length and use active voice.

✓ Include actionable, practical takeaways the reader can implement immediately.

✓ Integrate the provided keywords naturally into headers and body text where contextually relevant.

✓ Aim for content that is valuable by solving a specific problem, answering a key question, or providing unique insights for the target audience.

✗ No keyword stuffing. Keyword use must feel organic and reader-first.

✗ No generic AI phrases like "In today's digital landscape", "In the ever-evolving world of", or "This comprehensive guide".

OUTPUT FORMAT:

Generate a well-structured blog post (800-1200 words) with the following sections:

1. **Introduction:** Hook the reader, introduce the topic's relevance to the target audience, and state what they will learn.

2. **Body:** Use H2 and H3 headers to break down the topic into logical, digestible sections. Support points with examples, analogies, or data where helpful.

3. **Conclusion:** Summarize the core message and end with a strong, memorable final thought or call-to-action that reinforces the value provided.

The difference? Every gap the evaluator flagged is now filled. Keywords have a dedicated field. The engaging hook is defined with specific examples (surprising stat, relatable question, bold statement), and "valuable" now means solving a specific problem, answering a key question, or providing unique insights. Tone offers concrete options rather than a vague label. The output format specifies exactly how the AI should structure each section. Very little is left for the AI to assume.

That's what a prompt evaluator does in practice. By surfacing the gaps you can't see yourself, it helps turn a 6/10 prompt into a 9/10 one.

From Evaluation to Enhancement: Fixing Your Prompts

Once you've identified the weak spots, you have two paths to fix them.

You can rewrite the prompt yourself using the feedback. This is the best approach for learning, because you internalize the fixes and apply them naturally to future prompts. The evaluation gives you a specific list of what to address, so you're not guessing at improvements.

Or you can feed the prompt into a prompt enhancer to handle the rewriting. A prompt enhancer takes your original prompt and the identified weaknesses, then produces a stronger version with the gaps filled in. SpacePrompts has a Prompt Enhancer that can do this once you've signed up (free account works), or you can use whichever enhancement tool fits your workflow.

The evaluator-to-enhancer workflow is a practical loop: evaluate, identify the issues, enhance, then evaluate again to confirm the improvements landed. A few rounds of this and you'll notice your first drafts getting stronger on their own.
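The evaluate, enhance, re-evaluate loop can be sketched in a few lines of Python. The names `refine`, `evaluate`, and `enhance` are hypothetical and not SpacePrompts' API; the sketch assumes your evaluator returns a numeric score plus a list of issues, and that your enhancer takes the prompt and those issues and returns a rewritten prompt.

```python
from typing import Callable

# Hypothetical signatures: plug in whatever evaluator and enhancer you use.
Evaluate = Callable[[str], tuple[float, list[str]]]  # prompt -> (score, issues)
Enhance = Callable[[str, list[str]], str]            # prompt, issues -> new prompt


def refine(prompt: str, evaluate: Evaluate, enhance: Enhance,
           target: float = 9.0, max_rounds: int = 3) -> tuple[str, float]:
    """Evaluate, enhance, and re-evaluate until the score reaches `target`,
    an enhancement stops helping, or `max_rounds` passes have been used."""
    score, issues = evaluate(prompt)
    for _ in range(max_rounds):
        if score >= target or not issues:
            break
        candidate = enhance(prompt, issues)
        new_score, new_issues = evaluate(candidate)  # confirm the fix landed
        if new_score <= score:
            break  # no improvement: keep the better-scoring prompt
        prompt, score, issues = candidate, new_score, new_issues
    return prompt, score
```

The round limit and the "keep the better prompt" check are design choices for this sketch: they stop the loop from cycling indefinitely or accepting a rewrite that scores worse than what you started with.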

Our AI Prompt Evaluator is free and works right in the browser. It handles prompts for any AI model, whether that's ChatGPT, Claude, Gemini, or something else.

Build the Habit of Prompt Analysis

The real value of an AI prompt evaluator isn't the score. It's the feedback loop. Every time you evaluate a prompt and read the suggestions, you start recognizing the patterns that make prompts work. After a few weeks, you'll catch most blind spots yourself before running the evaluation.

If you're serious about getting better outputs from AI, start by checking the prompts you're already using. Run a few through the SpacePrompts Prompt Evaluator and see where you stand. Most people find gaps they never knew were there.